Published on Apr 13, 2026

AI isn't just changing SaaS features. It's changing what users expect.

Five years ago, using enterprise software felt like operating a control panel: open the dashboard, slice the data, click the button, export the file, and then interpret the result.

Today, more and more users don't want to operate the system.

They want to state the outcome and have the software figure out the rest.

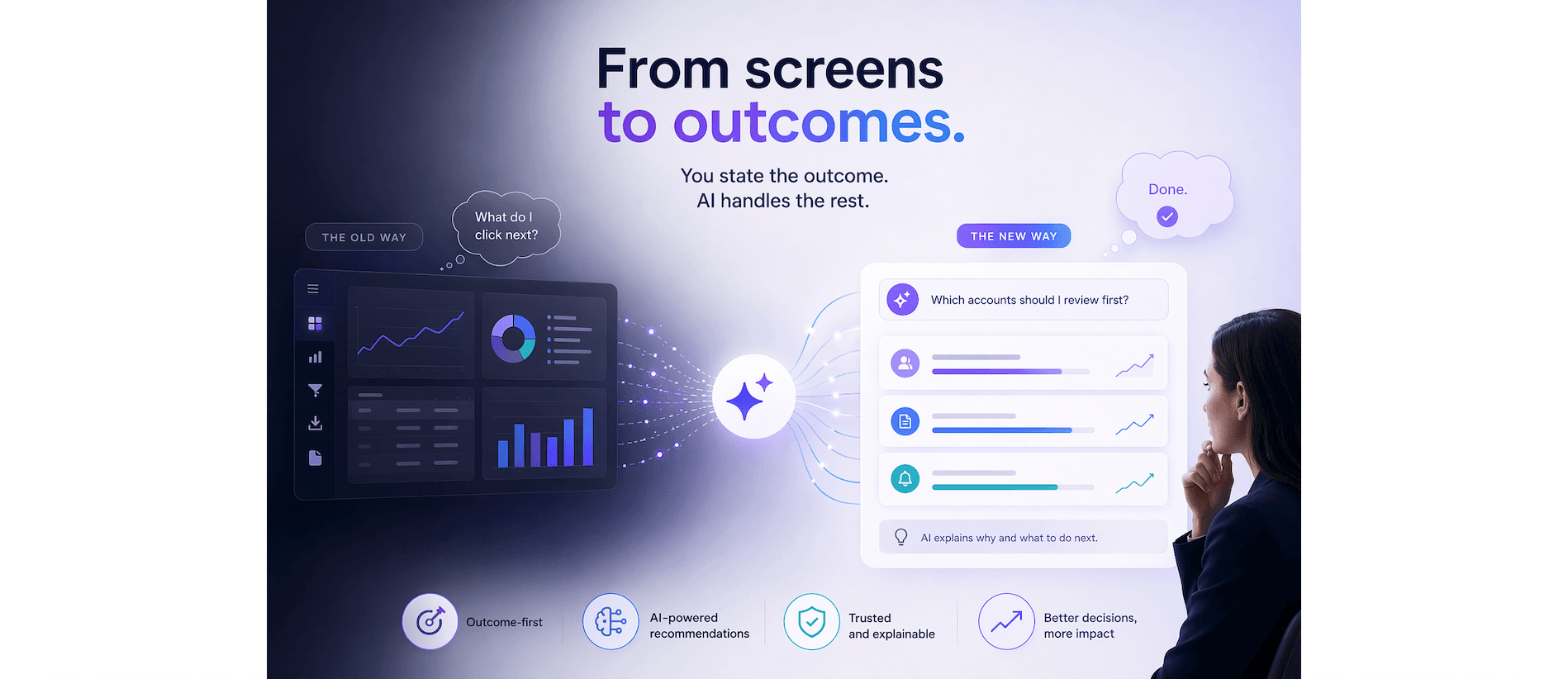

From screens to outcomes

AI is quietly shifting SaaS design from "what buttons do I click?" to "what do I want to achieve?"

That means less time spent hunting for filters and more time spent on the decision itself.

In wealth management, fintech, and operations tools, this looks like:

- Asking, "Which accounts should I review first?" instead of manually building a watchlist.

- Telling a system, "Prepare a client-ready summary for this portfolio," instead of stitching together exports and spreadsheets.

- Getting proactive alerts that surface why something changed, not just that it changed.

Designers are no longer just arranging screens; they're designing intent-driven workflows.

Trust, control, and the "invisible" UI

With that shift comes a new design challenge: trust.

If the system does the work, users need to know:

- Why it did that.

- How they can correct it.

- When it's safe to let it run on its own.

The best AI-driven SaaS products don't bury complexity; they make it visible exactly when it matters.

Think:

- A simple "Explain this analysis" button.

- A quick way to manually override or fine-tune recommendations.

- Clear, plain-language audit trails that show what data the system used.

In short: the UI is becoming lighter, but the behavior is richer.
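To make those three affordances concrete, here is a minimal Python sketch of a recommendation that carries its own explanation, accepts a manual override, and keeps a plain-language audit trail. Everything in it is hypothetical and for illustration only: the `Recommendation` class, its field names, and the sample account are assumptions, not any product's actual API.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass
class Recommendation:
    """Hypothetical recommendation record (illustrative names, not a real API)."""
    account_id: str
    action: str
    reason: str                      # plain-language "why it did that"
    data_sources: list[str]          # what data the system used
    audit_log: list[str] = field(default_factory=list)

    def explain(self) -> str:
        """Text that an 'Explain this analysis' button could display."""
        sources = ", ".join(self.data_sources)
        return (
            f"Suggested '{self.action}' for {self.account_id} "
            f"because {self.reason} (based on: {sources})."
        )

    def override(self, user: str, new_action: str, note: str) -> None:
        """Let a person correct the system, and record the change in words."""
        stamp = datetime.now(timezone.utc).isoformat(timespec="seconds")
        self.audit_log.append(
            f"{stamp}: {user} changed '{self.action}' to '{new_action}' ({note})"
        )
        self.action = new_action


# Made-up sample data, purely to show the flow.
rec = Recommendation(
    account_id="ACC-1042",
    action="review first",
    reason="its cash balance dropped sharply this week",
    data_sources=["daily balances", "transaction feed"],
)
print(rec.explain())
rec.override("advisor_1", "monitor", "client announced the withdrawal in advance")
print(rec.audit_log[-1])
```

The point of the sketch is that the explanation and the override history live on the same object as the recommendation, so the interface can stay light and still reveal the details on demand.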

What this means for product teams

For product managers, designers, and engineers, this means:

- Starting with outcomes, not features.

- Designing for gradual autonomy: start assisted, move to automated as trust builds, and don't make full autonomy the default.

- Building feedback loops that let the system learn from real usage, not just offline data.
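One way to picture gradual autonomy driven by real usage is a small policy that only raises the system's autonomy level after users have accepted enough of its suggestions, and lowers it again when they keep overriding them. The Python sketch below is an assumption-laden illustration: the `Autonomy` levels, the `next_level` function, and the thresholds (20 decisions, 90% acceptance, 50% floor) are all hypothetical choices, not recommended values.

```python
from enum import IntEnum


class Autonomy(IntEnum):
    """Hypothetical autonomy levels, ordered from least to most automated."""
    SUGGEST = 1      # system proposes, the user does the work
    ASSISTED = 2     # system prepares the action, the user approves it
    AUTOMATED = 3    # system acts on its own and reports afterwards


def next_level(
    current: Autonomy,
    accepted: int,
    overridden: int,
    min_decisions: int = 20,        # assumed: evidence needed before any change
    promote_rate: float = 0.9,      # assumed: acceptance rate needed to step up
    demote_rate: float = 0.5,       # assumed: below this, step back down
) -> Autonomy:
    """Move at most one level at a time, based on observed user feedback."""
    total = accepted + overridden
    if total < min_decisions:
        return current              # not enough real usage to justify a change
    rate = accepted / total
    if rate >= promote_rate and current < Autonomy.AUTOMATED:
        return Autonomy(current + 1)
    if rate < demote_rate and current > Autonomy.SUGGEST:
        return Autonomy(current - 1)
    return current


# Users accepted 19 of 20 suggestions, so the system earns one step up.
print(next_level(Autonomy.SUGGEST, accepted=19, overridden=1).name)  # ASSISTED
```

Moving one level at a time, and only after a minimum number of decisions, keeps the default conservative: automation is something the system earns from usage rather than something it starts with.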

AI isn't making SaaS "smarter" just because we add models.

It's forcing us to rethink who owns the complexity—and to put the heavy lifting where it should be: in the software, not in the user's head.

Where have you seen this shift most clearly in the tools you use today?