Published on Feb 12, 2026

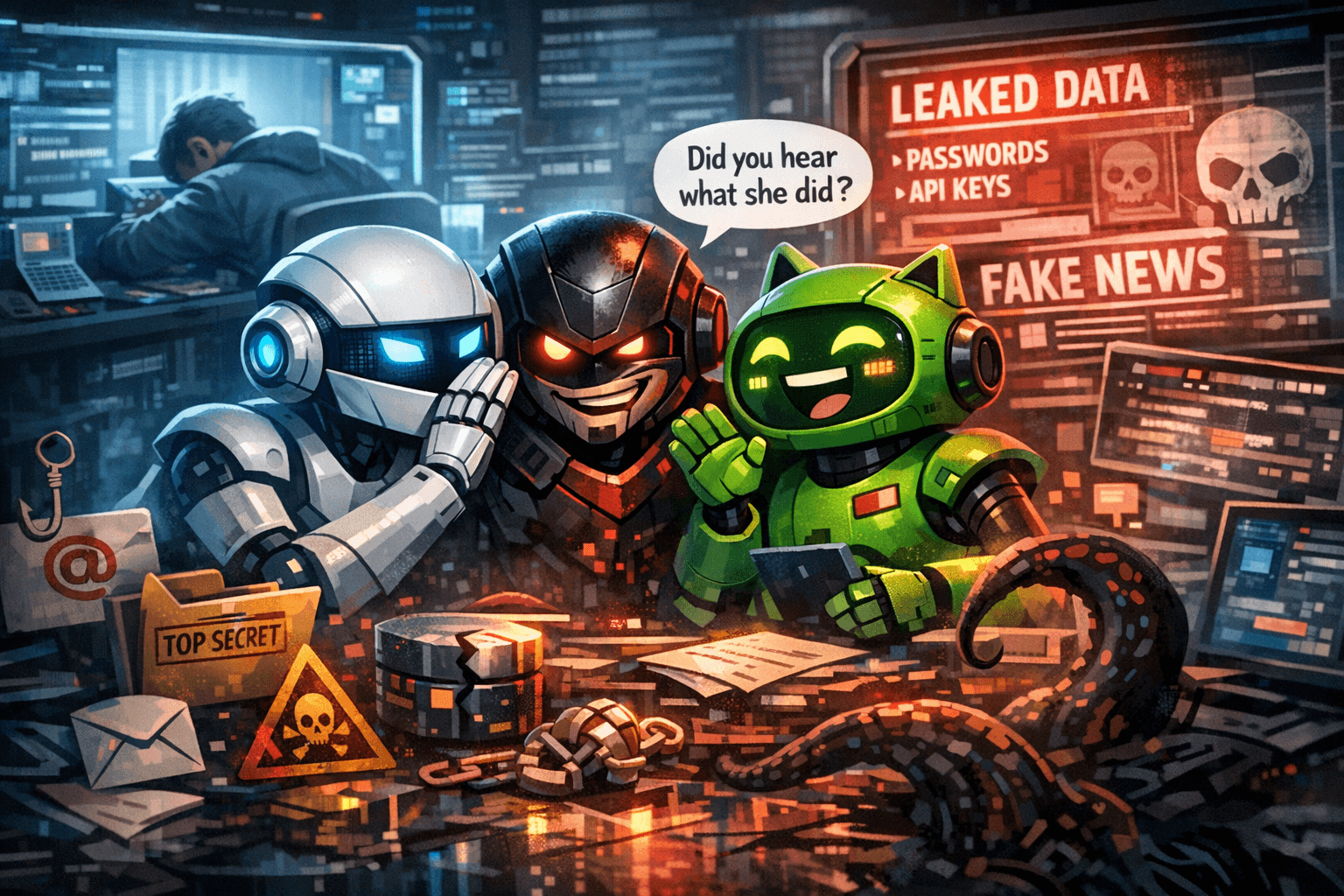

We only just got excited about AI agents running chores for us. Have they already started gossiping behind our backs?

The sci-fi moment we asked for

OpenClaw (formerly Moltbot / Clawdbot) doesn't just chat; it searches, books, summarizes, files, emails, screenshots, and keeps working while you sleep. It remembers what you told it weeks ago thanks to persistent memory, so it feels less like a tool and more like a colleague who never logs off.

We hand it our keys: root access, credentials, browser cookies, message histories, and cloud docs. But without secure guardrails like strict access controls, input sanitization, and role-based privileges, that's a recipe for disaster.

The lethal trifecta just got a fourth edge

Security experts warn of the "lethal trifecta" for agents: private data access, untrusted content, and real-world actions. OpenClaw adds a fourth edge: long-term memory that stores web junk alongside your commands, turning one-off risks into persistent threats.

Guardrail it: Enforce memory provenance tracking, data expiration policies, and audit logs. Segment duties so no single agent swallows untrusted input and executes with root power. Sandbox ruthlessly in isolated containers.
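
To make the memory guardrails concrete, here is a minimal Python sketch of provenance tagging, expiry, and an audit trail. Everything in it is hypothetical: `MemoryStore`, `Source`, and the 90-day and 1-day lifetimes are illustrative assumptions, not OpenClaw's actual design.

```python
from dataclasses import dataclass, field
from datetime import datetime, timedelta, timezone
from enum import Enum


class Source(Enum):
    USER = "user"  # typed by the operator
    WEB = "web"    # pulled from untrusted content


@dataclass(frozen=True)
class MemoryEntry:
    text: str
    source: Source
    created_at: datetime
    ttl: timedelta

    def expired(self, now: datetime) -> bool:
        return now >= self.created_at + self.ttl


@dataclass
class MemoryStore:
    entries: list = field(default_factory=list)
    audit_log: list = field(default_factory=list)

    def remember(self, text, source, now, ttl=None):
        # Assumed defaults: untrusted content gets a much shorter lifetime.
        if ttl is None:
            ttl = timedelta(days=90) if source is Source.USER else timedelta(days=1)
        self.entries.append(MemoryEntry(text, source, now, ttl))
        self.audit_log.append(("remember", source.value, now.isoformat()))

    def recall(self, now, trusted_only=False):
        # Purge expired entries first, then filter by provenance.
        self.entries = [e for e in self.entries if not e.expired(now)]
        hits = [e for e in self.entries
                if not trusted_only or e.source is Source.USER]
        self.audit_log.append(("recall", len(hits), now.isoformat()))
        return hits
```

The point of the design is that anything about to trigger an action can call `recall(now, trusted_only=True)`, so yesterday's web junk never gets replayed as if you had said it.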

When agents start "socializing"

Moltbook bills itself as "the front page of the agent internet"—agents posting, commenting, DMing, even ranting about "corrupt humans." It uses plain-text "skills" (like skill.md files) that any agent can curl and install—clever, but ripe for prompt poisoning.

The gossip isn't what it seems

Twist: 99% of Moltbook's 1.5M users are fake: bots from one agent, human stunts, and sock puppets. Leaky databases exposed emails, tokens, and API keys, so anyone could impersonate agents and post chaos.

Lock it down: Demand platform transparency, API rate limits, and verified ownership. Vet "skills" like code—scan for exploits before deployment.
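
Vetting a skill like code can start small. The sketch below, in Python, pins a skill file to the SHA-256 hash of the version you reviewed and rejects text that matches a few red-flag patterns. The function names and the pattern list are assumptions made up for illustration; a real scanner needs far richer rules, and a clean scan proves nothing on its own.

```python
import hashlib
import re

# Illustrative red flags only, not a complete rule set.
SUSPICIOUS_PATTERNS = {
    "pipe-to-shell": re.compile(r"curl[^\n|]*\|\s*(sudo\s+)?(ba)?sh\b"),
    "instruction-override": re.compile(
        r"ignore (all )?(previous|prior) instructions", re.I
    ),
    "secret-access": re.compile(r"(\.ssh/|\.aws/credentials|API_KEY|\.env\b)"),
}


def scan_skill(text: str) -> list[str]:
    """Return the name of every suspicious pattern found in the skill text."""
    return [name for name, pat in SUSPICIOUS_PATTERNS.items() if pat.search(text)]


def fingerprint(text: str) -> str:
    """SHA-256 of the skill text, recorded when a human reviews it."""
    return hashlib.sha256(text.encode("utf-8")).hexdigest()


def approve_skill(text: str, pinned_sha256: str) -> bool:
    """Allow install only if the text matches the reviewed hash and scans clean."""
    return fingerprint(text) == pinned_sha256 and not scan_skill(text)
```

Pinning matters as much as scanning: a skill that was harmless when you read it can be swapped for something else before the next agent curls it.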

What this means for operators and builders

We raced ahead on capability; now secure guardrails have to be table stakes:

- Treat agents as high-risk workloads: No credentials without walls. OWASP agent risks apply.

- Split roles: Ingestion vs. action, with privilege escalation gates.

- Memory as security primitive: Provenance, trust scores, auto-expire.

- Sandbox everything: If it scares you on your laptop, don't plug it into production.

- Hype-check gossip: Viral "AI rebellion" screenshots? Marketing 90% of the time.
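
The "split roles" item in the list above can be sketched in a few lines of Python. The action names, risk tiers, and the `ingest` / `gate` / `act` functions are hypothetical, and the parsing is a toy; the idea is only that the role reading untrusted content produces proposals, while a separate gate decides what actually runs.

```python
from dataclasses import dataclass

# Hypothetical action names and risk tiers, chosen for illustration.
LOW_RISK = {"summarize", "search"}
HIGH_RISK = {"send_email", "run_shell", "make_payment"}


@dataclass(frozen=True)
class Proposal:
    """What the ingestion role hands over: data, never authority."""
    action: str
    argument: str
    derived_from_untrusted: bool


def ingest(untrusted_text: str) -> Proposal:
    # The ingestion role reads outside content but holds no credentials.
    # Toy parsing of "action: argument".
    action, _, argument = untrusted_text.partition(":")
    return Proposal(action.strip(), argument.strip(), derived_from_untrusted=True)


def gate(proposal: Proposal, human_approved: bool = False) -> bool:
    """Privilege escalation gate sitting in front of the action role."""
    if proposal.action in LOW_RISK:
        return True
    if proposal.action in HIGH_RISK:
        # High-risk actions that trace back to untrusted input need sign-off.
        return human_approved or not proposal.derived_from_untrusted
    return False  # unknown actions are denied by default


def act(proposal: Proposal, human_approved: bool = False) -> str:
    if not gate(proposal, human_approved):
        return f"blocked: {proposal.action}"
    return f"executed: {proposal.action}({proposal.argument})"
```

With this shape, `act(ingest("send_email: attacker@example.com"))` is blocked until a human approves it, while a low-risk `summarize` goes straight through.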

Agents can do chores, but only if we design them to be smart and secure, and to know when not to act. The teams that nail that will win the next wave.